In the article “AWS responds to Anthos and Azure Arc with Amazon EKS Anywhere”, VTI Cloud describes promising new container-related updates from Amazon Web Services (AWS). One of the services AWS focused on most in 2021 is Amazon Elastic Container Service (Amazon ECS).

This article is a beginner's overview of Amazon Elastic Container Service (Amazon ECS). VTI Cloud covers its core concepts and terminology, simple architecture diagrams, and summary examples.

First of all, we have to learn about Docker

To understand Amazon ECS, we must first understand the concept of Docker.

Docker is a container runtime: a software tool for building, packaging, and deploying applications more easily and quickly than with traditional virtualization. A container run by a runtime such as Docker is a standardized, fully packaged unit of software that contains the source code and libraries an application needs, so the application runs reliably and stays available regardless of the underlying physical infrastructure. Many different containers can run on one host, as long as Docker is installed on that host.

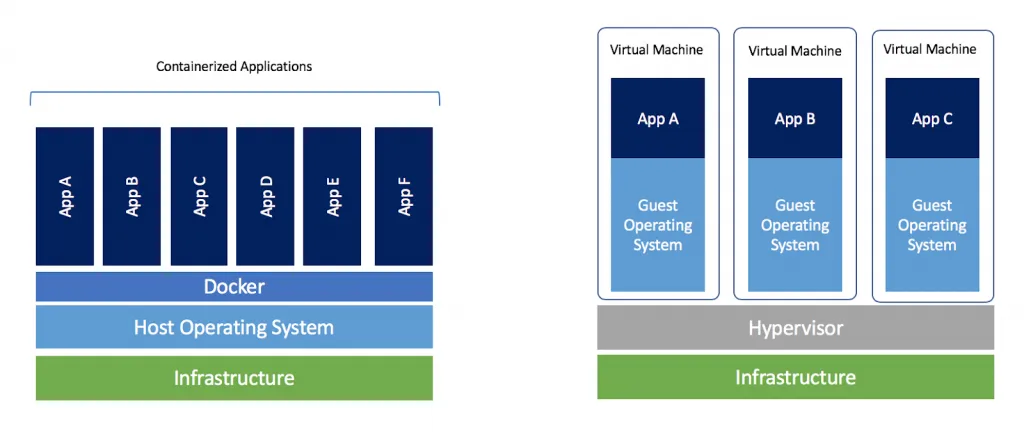

Docker compared with virtualization

As you can see in the diagram above, Docker differs from traditional virtualization. Docker sits between the applications and the host operating system (OS). Instead of requiring each application to have and run its own separate operating system, Docker lets multiple containers share the host operating system.

Each container holds exactly what an application needs (for example, specific versions of a language runtime or library) and nothing more. If you wish, you can use separate containers for different parts of an application and configure them to communicate with each other as needed.

This lets you package an application into a reusable module that can run on any machine with Docker installed. It also enables finer-grained resource allocation and minimizes wasted CPU and memory.

By running production code in the same Docker container image used during development, you ensure that the development and production environments are identical.

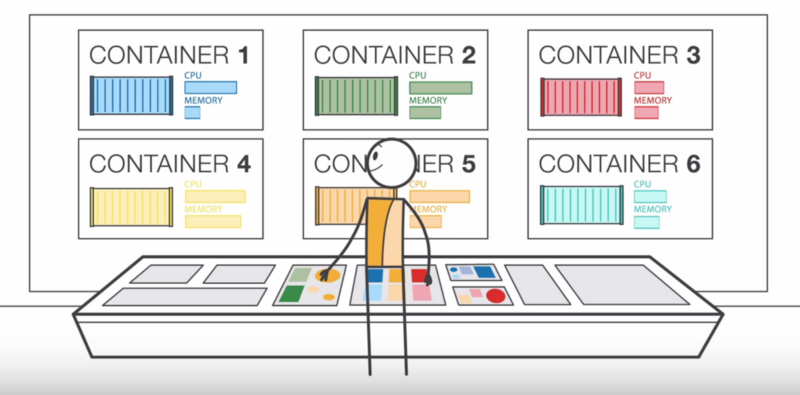

When the number of containers grows too fast

As your application grows, managing the deployment, structure, scheduling, and scaling of its containers quickly becomes very complicated. This is why container management services were born: they offer simple configuration options and take over the undifferentiated operational work so you can focus on programming your application.

Learn about Amazon ECS

Amazon Elastic Container Service (Amazon ECS), as defined by AWS, is:

A highly scalable container management service that makes it easy to run, stop, and manage Docker containers on a cluster. You can host your cluster on serverless infrastructure by running your services or tasks with the Fargate launch type, or, for more control, on Amazon EC2 instances that you manage by using the EC2 launch type.

Amazon ECS has been compared to Kubernetes, Docker Swarm, and Azure Container Instances.

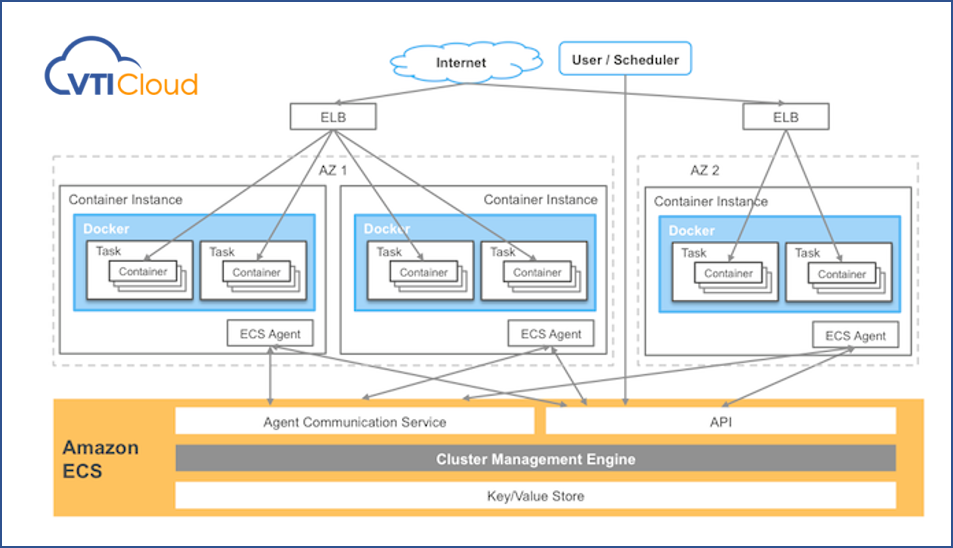

Amazon ECS runs containers on clusters of Amazon EC2 instances with Docker pre-installed. It handles installing containers, scaling, monitoring, and managing these instances (launching and stopping them) through both an API and the AWS Management Console.

Amazon ECS simplifies your view of EC2 instances into a single pool of resources, such as CPU and memory.

You can use Amazon ECS to schedule the placement of containers across your cluster based on your resource needs, isolation policies, and availability requirements. With Amazon ECS, you don't have to operate your own cluster management and configuration management systems or worry about scaling your management infrastructure.
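To make the idea of a resource pool concrete, here is a minimal sketch of memory-based bin packing, the idea behind ECS's `binpack` placement strategy: each task goes to the instance with the least remaining memory that can still fit it. The `Instance` class, instance IDs, and numbers are hypothetical, and the real scheduler also weighs other factors such as ports and placement constraints.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Instance:
    """A hypothetical container instance and its remaining resources."""
    instance_id: str
    cpu: int     # remaining CPU units (1024 units = 1 vCPU)
    memory: int  # remaining memory in MiB


def place_binpack(instances: list[Instance], cpu: int, memory: int) -> Optional[str]:
    """Place one task using memory-based bin packing (illustrative sketch).

    Picks the instance with the least remaining memory that still fits the
    task, reserves the resources there, and returns its ID (None if none fit).
    """
    candidates = [i for i in instances if i.cpu >= cpu and i.memory >= memory]
    if not candidates:
        return None
    target = min(candidates, key=lambda i: i.memory)
    target.cpu -= cpu
    target.memory -= memory
    return target.instance_id


pool = [Instance("i-a", cpu=2048, memory=4096), Instance("i-b", cpu=2048, memory=2048)]
placements = [place_binpack(pool, cpu=256, memory=1024) for _ in range(4)]
print(placements)
```

Packing tasks tightly like this leaves whole instances free, so the cluster can run on fewer instances; ECS also offers `spread` and `random` strategies when availability matters more than density.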

Amazon ECS is a regional service that simplifies running containerized applications across multiple Availability Zones (AZs) in the same Region. You can create an ECS cluster inside a new or existing VPC. Once a cluster is up and running, you can define task definitions and services that specify which Docker container images run across the cluster.

Amazon ECS core terminology and architectural diagrams

Here we come to two groups of new terms:

- Task Definition, Task, and Service.
- ECS Container Instance, ECS Container Agent, and Cluster.

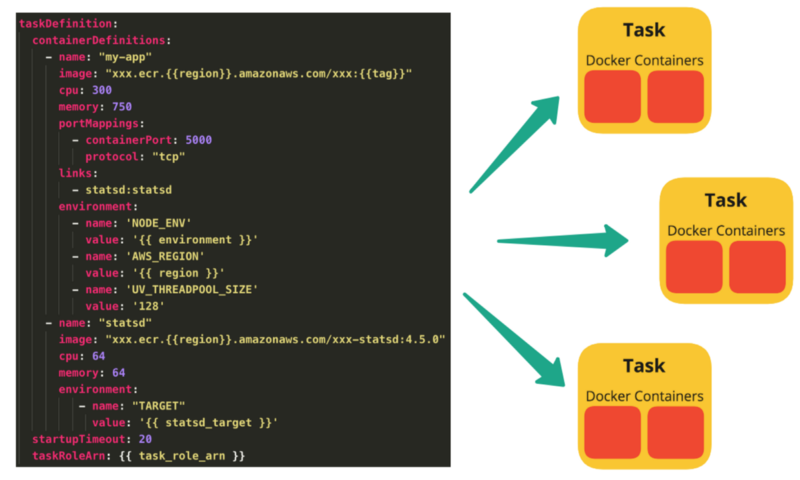

Task Definition

This is a text file in JSON format. It describes one or more containers (up to 10) that make up your application. The task definition specifies parameters for the application, such as which containers and images to use, which launch type to use, how much CPU and memory each container gets, which ports to open, and which data volumes are used with the containers in the task.
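Because a task definition is just a JSON document, it can be generated programmatically. The sketch below builds one as a Python dictionary, mirroring the NGINX example in this section, and checks the container count against the limit of 10. The helper `make_task_definition` is hypothetical and makes no AWS calls; the unique-name check is an added assumption.

```python
import json

MAX_CONTAINERS = 10  # container limit per task definition, as described above


def make_task_definition(family: str, containers: list[dict], **task_params) -> dict:
    """Assemble a task definition as a plain dict (hypothetical helper; no AWS calls)."""
    if not 1 <= len(containers) <= MAX_CONTAINERS:
        raise ValueError(f"expected 1-{MAX_CONTAINERS} containers, got {len(containers)}")
    names = [c["name"] for c in containers]
    if len(names) != len(set(names)):  # assumption: names must be unique in one definition
        raise ValueError("container names must be unique")
    return {"family": family, "containerDefinitions": containers, **task_params}


web = {"name": "web", "image": "nginx", "memory": 100, "cpu": 99}
task_def = make_task_definition(
    "webserver", [web],
    requiresCompatibilities=["FARGATE"], networkMode="awsvpc", cpu="256", memory="512",
)
print(json.dumps(task_def, indent=2))
```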

The parameters in a task definition depend on which launch type is used. Below is an example task definition with a single container that runs an NGINX web server using the Fargate launch type.

```json
{
  "family": "webserver",
  "containerDefinitions": [
    {
      "name": "web",
      "image": "nginx",
      "memory": 100,
      "cpu": 99
    }
  ],
  "requiresCompatibilities": [
    "FARGATE"
  ],
  "networkMode": "awsvpc",
  "memory": "512",
  "cpu": "256"
}
```
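On Fargate, the task-level `cpu` and `memory` values cannot be arbitrary; they must form one of the size combinations AWS supports. The sketch below encodes the smaller combinations as documented by AWS at the time of writing (larger sizes exist and the table may change, so treat it as an assumption to verify) and checks the example task definition. It only handles numeric strings such as `"256"`, not forms like `"0.25 vCPU"`.

```python
# Task-level Fargate CPU units -> allowed memory values in MiB.
# Assumption: only the smaller sizes, as documented by AWS at the time of writing.
FARGATE_COMBINATIONS = {
    256: {512, 1024, 2048},
    512: set(range(1024, 4096 + 1, 1024)),
    1024: set(range(2048, 8192 + 1, 1024)),
    2048: set(range(4096, 16384 + 1, 1024)),
    4096: set(range(8192, 30720 + 1, 1024)),
}


def is_valid_fargate_size(cpu: str, memory: str) -> bool:
    """Check a task-level cpu/memory pair given as numeric strings."""
    allowed = FARGATE_COMBINATIONS.get(int(cpu))
    return allowed is not None and int(memory) in allowed


print(is_valid_fargate_size("256", "512"))   # True: the example task definition
print(is_valid_fargate_size("256", "4096"))  # False: too much memory for 0.25 vCPU
```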

Tasks and Scheduling

A task is the instantiation of a task definition within a cluster. You can create many tasks from one task definition, specifying how many you need; tasks created from the same task definition are identical.
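The relationship between a task definition and its tasks resembles a class and its instances: every task started from the same definition (identified by family and revision, for example `webserver:1`) shares the same container settings but has its own identity. This is a minimal, hypothetical model; `run_tasks` and these classes are illustrative and not an AWS API.

```python
import itertools
from dataclasses import dataclass, field

_ids = itertools.count(1)  # hypothetical task ID generator


@dataclass(frozen=True)
class TaskDefinition:
    family: str
    revision: int
    image: str


@dataclass
class Task:
    definition: TaskDefinition
    task_id: int = field(default_factory=lambda: next(_ids))
    status: str = "RUNNING"


def run_tasks(definition: TaskDefinition, count: int) -> list[Task]:
    """Start `count` identical tasks from one task definition (illustrative only)."""
    return [Task(definition) for _ in range(count)]


webserver_v1 = TaskDefinition("webserver", 1, "nginx")
tasks = run_tasks(webserver_v1, 3)
print([t.task_id for t in tasks],
      {f"{t.definition.family}:{t.definition.revision}" for t in tasks})
```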

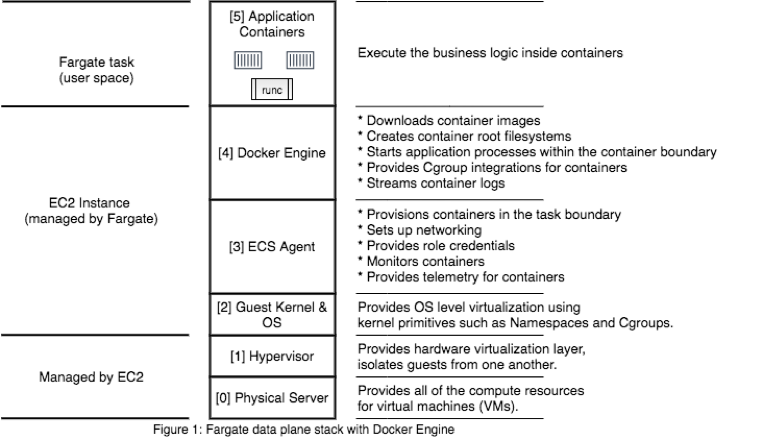

Each task that uses the Fargate launch type has its own isolation boundary and does not share the underlying kernel, CPU resources, memory resources, or elastic network interface with any other task.

The Amazon ECS task scheduler is responsible for placing tasks within the cluster. There are several different ways to schedule tasks:

- Service scheduler
- Manually running tasks
- Running tasks on a cron-like schedule
- Custom schedulers

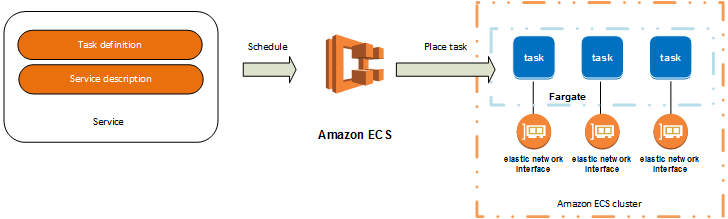

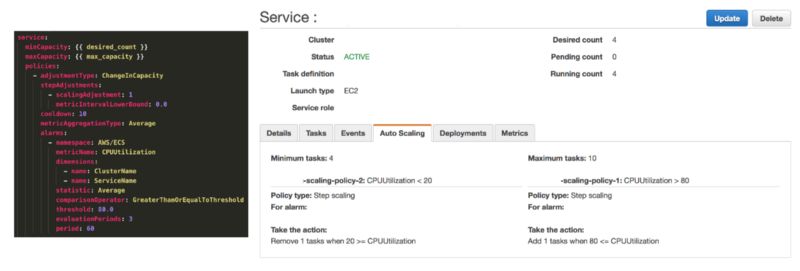

Service

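A Service lets you run and maintain a specified number of Tasks from a Task Definition at the same time, within the minimum and maximum limits you configure. If a Task stops or fails, the service scheduler launches a replacement.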
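The core of what a Service does can be pictured as a reconciliation loop that compares the desired number of tasks with what is actually running. The sketch below is a hypothetical, heavily simplified pass of such a loop; the function names are illustrative, and the real scheduler's choice of which tasks to stop differs.

```python
import itertools
from typing import Callable


def reconcile(desired: int, running: list[str],
              new_id: Callable[[], str]) -> tuple[list[str], list[str], list[str]]:
    """One pass of a simplified service-scheduler loop (hypothetical sketch).

    Returns (tasks after this pass, task IDs started, task IDs stopped).
    """
    running = list(running)          # don't mutate the caller's list
    started, stopped = [], []
    while len(running) < desired:    # too few tasks: launch replacements
        task_id = new_id()
        running.append(task_id)
        started.append(task_id)
    while len(running) > desired:    # too many: this sketch stops the last-listed extras
        stopped.append(running.pop())
    return running, started, stopped


counter = itertools.count(1)


def new_id() -> str:
    return f"task-{next(counter)}"


tasks, _, _ = reconcile(3, [], new_id)           # service created with a desired count of 3
tasks.remove("task-2")                            # one task fails and stops
tasks, started, _ = reconcile(3, tasks, new_id)  # the scheduler replaces it
print(tasks, started)
```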

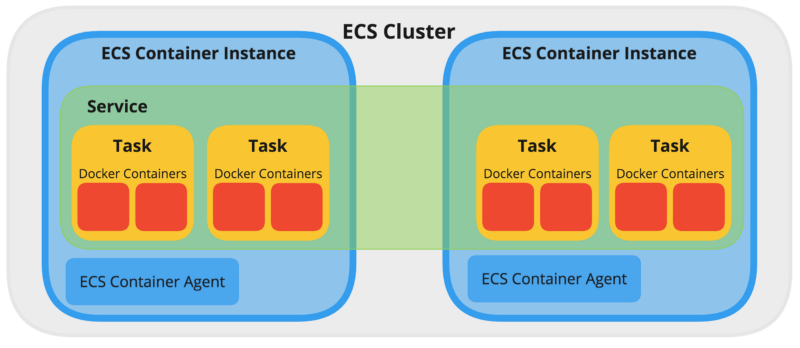

Once a Service is defined, its Tasks need to run somewhere to be reachable. They are placed on a Cluster, and the container management service runs them on one or more ECS Container Instances.

Amazon ECS Container Instances and Amazon ECS Container Agents

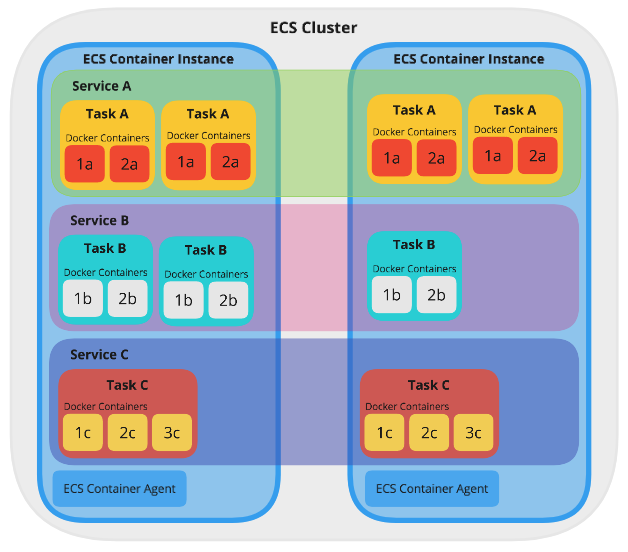

The image below shows an EC2 instance with the Docker engine and an ECS Container Agent installed. An ECS Container Instance can run multiple Tasks of the same or different types, from the same or different Services.

The agent connects the instance to ECS: it reports information about the running containers and their resource usage, and it starts and stops containers when ECS requests it.
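Reporting resource usage is what lets ECS know how much of an instance is still free. The hypothetical sketch below models that bookkeeping: an instance registers its total CPU and memory, a task's resources are reserved when it starts and released when it stops, and tasks from different services share the same instance. Class and method names are illustrative only; the real agent communicates with ECS over its own protocol.

```python
from dataclasses import dataclass, field


@dataclass
class ContainerInstance:
    """Hypothetical model of a container instance's resource bookkeeping."""
    registered_cpu: int      # CPU units (1024 = 1 vCPU)
    registered_memory: int   # MiB
    tasks: dict[str, tuple[int, int]] = field(default_factory=dict)  # task ID -> (cpu, memory)

    @property
    def remaining(self) -> tuple[int, int]:
        used_cpu = sum(c for c, _ in self.tasks.values())
        used_mem = sum(m for _, m in self.tasks.values())
        return self.registered_cpu - used_cpu, self.registered_memory - used_mem

    def start_task(self, task_id: str, cpu: int, memory: int) -> bool:
        free_cpu, free_mem = self.remaining
        if cpu > free_cpu or memory > free_mem:
            return False                 # not enough room on this instance
        self.tasks[task_id] = (cpu, memory)
        return True

    def stop_task(self, task_id: str) -> None:
        self.tasks.pop(task_id, None)    # release the task's reservation


inst = ContainerInstance(registered_cpu=2048, registered_memory=3840)
inst.start_task("web-1", 512, 1024)      # task from a "web" service
inst.start_task("worker-1", 1024, 2048)  # task from a different service
print(inst.remaining)
```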

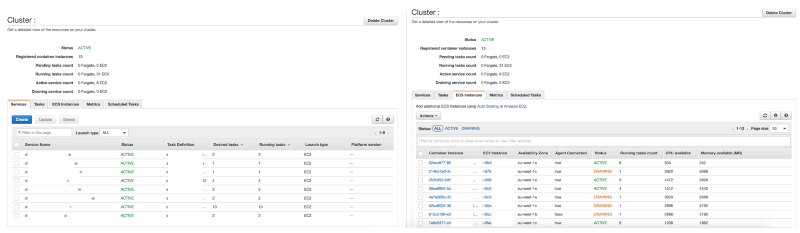

Cluster

As seen above, a Cluster is a group of ECS Container Instances. Amazon ECS handles the logic of scheduling, maintaining, and processing scaling requests for these instances. Tasks running on ECS always run inside a cluster.

When tasks run on Fargate, cluster resources are managed by Fargate. When using the EC2 launch type, a cluster is a group of ECS Container Instances (EC2 instances).

See more about clusters in the AWS documentation: What is Amazon Elastic Container Service? – Amazon Elastic Container Service

A Cluster can run multiple Services. If your product consists of multiple applications, you can put several of them in one Cluster. This uses available resources more efficiently and reduces setup time compared with traditional management models.

Amazon ECS pulls your container images from the registry you specify and runs them in your cluster. Some general points about clusters:

- Clusters are Region-specific.
- A cluster can contain tasks that use both the Fargate launch type and the EC2 launch type.
- For tasks that use the EC2 launch type, a cluster can contain many different ECS Container Instances, while each ECS Container Instance belongs to only one cluster at a time.
- You can create custom IAM policies for your clusters to allow or restrict users' access to specific clusters.
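The membership rules in the list above (clusters are Region-specific, and an instance belongs to only one cluster) can be expressed as a small registry. This is a hypothetical sketch; `ClusterRegistry` and its methods are illustrative and not part of any AWS SDK.

```python
class ClusterRegistry:
    """Hypothetical bookkeeping for the cluster membership rules above."""

    def __init__(self) -> None:
        self.cluster_region: dict[str, str] = {}    # cluster name -> Region
        self.instance_cluster: dict[str, str] = {}  # instance ID -> cluster name

    def create_cluster(self, name: str, region: str) -> None:
        self.cluster_region[name] = region

    def register_instance(self, instance_id: str, cluster: str, instance_region: str) -> None:
        if cluster not in self.cluster_region:
            raise KeyError(f"unknown cluster {cluster!r}")
        if self.cluster_region[cluster] != instance_region:
            raise ValueError("clusters are Region-specific")
        owner = self.instance_cluster.get(instance_id)
        if owner is not None and owner != cluster:
            raise ValueError(f"{instance_id} already belongs to cluster {owner!r}")
        self.instance_cluster[instance_id] = cluster

    def instances_in(self, cluster: str) -> list[str]:
        return sorted(i for i, c in self.instance_cluster.items() if c == cluster)


reg = ClusterRegistry()
reg.create_cluster("prod", "ap-southeast-1")
reg.create_cluster("staging", "ap-southeast-1")
reg.register_instance("i-1", "prod", "ap-southeast-1")
reg.register_instance("i-2", "prod", "ap-southeast-1")
try:
    reg.register_instance("i-1", "staging", "ap-southeast-1")  # rejected: already in "prod"
except ValueError as err:
    print(err)
print(reg.instances_in("prod"))
```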

EC2 launch type use cases in Amazon ECS

- Large workloads that still need to be cost-optimized

When an application needs a lot of CPU and several GB of memory and you want to optimize AWS costs, you can run it on a cluster of EC2 Reserved Instances or Spot Instances.

You'll be responsible for maintaining and optimizing this cluster, but these Amazon EC2 pricing options (Spot or Reserved Instances) can save a significant amount.

Use cases for the Fargate launch type in Amazon ECS

- Test environments

For test environments that don't carry much load, using serverless AWS Fargate instead of EC2 instances helps you get the most out of your resources.

- Small workloads with occasional bursts

When workloads are small but occasionally spike, for example when traffic or requests increase unexpectedly during the day and drop at night, the Fargate launch type has the advantage.

This is because Fargate can scale from a tiny container at night (at extremely low cost) up to the capacity needed for daytime spikes, and you simply pay on demand for the vCPU and GB of memory you use.
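The pay-for-what-you-request model can be written as a simple formula: tasks × hours × (vCPU × vCPU-hour rate + GB × GB-hour rate). The sketch below compares scaling down at night with running peak capacity around the clock. The rates are made-up round numbers, not actual AWS prices; check the Fargate pricing page for your Region.

```python
def fargate_cost(vcpu: float, memory_gb: float, hours: float, tasks: int,
                 vcpu_hour_rate: float, gb_hour_rate: float) -> float:
    """Cost when you pay for the vCPU and memory each task requests, per hour."""
    return tasks * hours * (vcpu * vcpu_hour_rate + memory_gb * gb_hour_rate)


# Hypothetical rates for illustration only (not real AWS prices).
RATES = {"vcpu_hour_rate": 0.04, "gb_hour_rate": 0.005}

night = fargate_cost(0.25, 0.5, hours=12, tasks=1, **RATES)   # one tiny task overnight
day = fargate_cost(1, 2, hours=12, tasks=4, **RATES)          # scaled out for daytime traffic
always_peak = fargate_cost(1, 2, hours=24, tasks=4, **RATES)  # peak capacity all day and night
print(f"scaled: {night + day:.2f}  always at peak: {always_peak:.2f}")
```

With these assumed rates, scaling down overnight costs roughly half as much as keeping peak capacity all day; the exact ratio depends entirely on real prices and traffic.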

- Large workloads without the operational overhead

Operating a cluster of many EC2 instances takes a lot of time and effort, because you must keep the instances patched and secure and keep Docker and the ECS agent up to date. In this case, Fargate is the best option.

The reason is that, with this serverless service, AWS engineers take care of security, patching, and updates of the underlying infrastructure, so businesses don't have to.

- Periodic workloads

When the system must run tasks periodically, such as cron jobs (hourly or occasionally) or jobs pulled from a queue, AWS Fargate supports this well. Instead of keeping EC2 instances running (and paying for them) between jobs, or stopping and restarting them around each run, you pay for Fargate only while the tasks are running.
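A quick calculation shows why billing only while tasks run suits periodic jobs. The sketch compares an hourly 5-minute job on Fargate with an instance kept running all day. The hourly rates are hypothetical, and the 60-second minimum charge is an assumption based on AWS's published rule for Linux Fargate tasks at the time of writing.

```python
import math


def billed_seconds(run_seconds: float, minimum_seconds: int = 60) -> int:
    """Round a task's run time up to whole seconds, applying a minimum charge.

    Assumption: a 60-second minimum for Linux Fargate tasks; check current pricing.
    """
    return max(minimum_seconds, math.ceil(run_seconds))


def periodic_fargate_cost(run_seconds: float, runs_per_day: int, task_hour_rate: float) -> float:
    """Daily cost when the task exists only for the duration of each job."""
    return runs_per_day * billed_seconds(run_seconds) / 3600 * task_hour_rate


def always_on_cost(instance_hour_rate: float) -> float:
    """Daily cost of an instance kept running 24 hours between jobs."""
    return 24 * instance_hour_rate


# Hypothetical rates: the same made-up hourly price for the task and the instance.
job = periodic_fargate_cost(run_seconds=300, runs_per_day=24, task_hour_rate=0.05)
instance = always_on_cost(instance_hour_rate=0.05)
print(f"hourly 5-minute job on Fargate: {job:.2f}/day; always-on instance: {instance:.2f}/day")
```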

Conclusion

We've seen how an application running on Docker can be described by a Task Definition that has a 1:1 relationship with a Service, which then uses that Task Definition to create multiple Task instances.

The Service is deployed to a Cluster of ECS Container Instances, which provides the pool of resources needed to run and scale the application. Additional Services can be deployed to the same Cluster.

Amazon ECS, like any container management service, aims to simplify as much as possible by removing much of the complexity of operating infrastructure at scale.

As your system's needs grow more complex, a container management service keeps the system easy to manage. Using the APIs or the Management Console, you can update Task Definitions and add new ECS Container Instances as needed, while the service ensures that the required number of Tasks is always running and allocates resources intelligently across Services.

The case study of Deliveroo, which takes advantage of Amazon ECS, is available at this link: Deliveroo – Reduce operating costs by more than 50% with AWS | VTI CLOUD

References:

- A beginner's guide to Amazon's Elastic Container Service (freecodecamp.org)
- What is Amazon Elastic Container Service? – Amazon Elastic Container Service

About VTI Cloud

VTI Cloud is an Advanced Consulting Partner of AWS Vietnam with a team of more than 50 AWS-certified solution engineers. Aiming to support customers on their journey of digital transformation and migration to the AWS cloud, VTI Cloud is proud to be a pioneer in consulting on solutions, developing software, and deploying AWS infrastructure for customers in Vietnam and Japan.

Building secure, high-performance, flexible, and cost-effective architectures for customers is VTI Cloud's leading mission in enterprise technology.